How to Score Interviews Consistently: A Structured Evaluation Guide for Hiring Teams

Most hiring teams know structured interviews are best practice. Research by Frank Schmidt and John Hunter (1998), revisited in 2021 by Professor Paul Sackett and colleagues at the University of Minnesota, found that structured interviews rank among the strongest predictors of job performance, ahead of many common hiring methods.

The problem isn’t understanding structured interviews; it’s what happens after a real interview ends.

Evaluation, not interviewing, is where structured hiring breaks down.

Interviewers take inconsistent notes. Feedback reads “seems like a good cultural fit.” Hiring managers weigh criteria differently. Candidates are compared on impressions rather than evidence. And by the time a panel convenes to make a decision, nobody remembers exactly what anyone said.

This guide walks through how to build a structured interview evaluation process that holds up in practice, and how modern video interview platforms, scoring rubrics, and AI-powered summaries make that consistency scalable across your entire hiring team.

What is a Structured Interview?

A structured interview is one in which every candidate answers the same predetermined questions and every answer is evaluated against the same standardized criteria. It is a deliberate counterweight to the natural human tendency to improvise, chat, and make snap judgments.

Contrast that with an unstructured interview, where questions vary by interviewer, follow-up is driven by gut instinct, and evaluations are largely informal. The difference matters far more than most hiring teams realize.

| | Structured Interview | Unstructured Interview |
|---|---|---|
| Questions | Same for every candidate | Vary by interviewer and conversation |
| Evaluation | Standardized rubric with scored criteria | Informal notes and general impressions |
| Candidate comparison | Objective, side-by-side | Difficult; apples to oranges |
| Bias exposure | Significantly reduced | High; cognitive biases run unchecked |
| Legal defensibility | Strong documentation trail | Vulnerable |
| Predictive validity | .51 (Schmidt and Hunter, 1998) | Lower (.38 in the same analysis) |

The predictive validity gap is meaningful. In hiring, even small improvements in prediction translate into significant business outcomes: fewer bad hires, lower turnover, and stronger team performance. The average cost per hire in the U.S. now sits at around $4,700, and failed hires cost substantially more when onboarding, lost productivity, and replacement costs are factored in.

Why Structured Interview Evaluations Matter

Structured evaluation does more than keep your process tidy. It directly affects who gets hired and why, leading to:

- Fairer Hiring Decisions: Structured scoring applies the same lens to every candidate, which actively reduces room for unconscious bias.

- Stronger Candidate Comparison: When all candidates answer the same structured interview questions and are scored on the same dimensions, comparison is genuinely meaningful. Without that common baseline, hiring teams are not really comparing candidates. They are comparing whoever made the strongest impression on a given day.

- Faster Decisions: Standardized scoring data gives hiring teams something concrete to work from. Instead of relitigating impressions in a debrief, they are reviewing scored rubrics and documented notes. Research on hiring timelines shows that process speed is increasingly a competitive advantage: 42% of candidates drop out when scheduling and decision-making drag.

- Better Documentation: Structured evaluations create a defensible paper trail. In regulated industries like healthcare, government, and education, that documentation is essential for compliance and audit readiness.

- A Better Candidate Experience: This one often gets overlooked. When candidates know they will be evaluated on consistent, job-relevant criteria, the process feels fairer. 66% of candidates say a positive interview experience directly influenced their decision to accept an offer.

The Real Problem: Structured Interviews Often Become Unstructured During Evaluation

You can design a perfectly structured interview—identical questions, behavioral anchors, job-relevant criteria—and still end up with an unstructured evaluation if you do not have a system for capturing and scoring responses consistently.

This happens in a few predictable ways:

- Interviewers Take Inconsistent Notes: One interviewer writes three pages of observations. Another writes, “strong communicator — liked her.” The information asymmetry makes a fair comparison impossible.

- Memory Degrades Fast: When hiring teams review candidates three or four days after interviews, they are not working from accurate recall but from whatever impression stuck. Memory of specific interview content fades quickly, especially when interviewers see multiple candidates in a compressed window, which is one more reason recorded video interviews work in your favor.

- Group Discussion Introduces Conformity Bias: When teams discuss candidates together before scoring independently, dominant voices shape consensus. What feels like a collective judgment is often one person’s opinion with agreement layered on top.

- Interviewers Weigh Criteria Differently: Without explicit scoring guidance, “communication skills” means something different to every evaluator. One interviewer values conciseness, another values warmth, and a third values technical vocabulary. The same candidate gets a 4, a 2, and a 5.

Even when the interview itself is well-structured, evaluation without a consistent system reintroduces the subjectivity you set out to eliminate.

How to Build a Structured Interview Evaluation Process

Step 1: Ask Every Candidate the Same Questions

This sounds obvious, but it breaks down constantly in practice. Interviewers go off-script, follow interesting tangents, or adjust structured interview questions based on what a candidate says. Every deviation creates a comparison problem.

One effective way to enforce question consistency at scale is through a one-way video interview. In a one-way interview, also called an on-demand interview or on-demand video interview, every candidate records responses to the same questions in the same format. There is no opportunity for interviewer improvisation. The interview becomes a standardized artifact that multiple reviewers can evaluate independently, rather than a free-form conversation that exists only in someone’s memory.

This format has been widely adopted because it solves a structural problem that scheduling alone cannot: it separates the act of interviewing from the act of evaluating, and evaluation is where most structured processes actually fall apart.

Step 2: Use a Standardized Evaluation Rubric

A scoring rubric translates the question “how did they do?” into something measurable. Instead of recording overall impressions, evaluators score candidates on specific dimensions relevant to the role. Depending on the needs of the role, a solid interview evaluation rubric covers:

- Relevant Educational Background: Does their training align with what the role requires?

- Related Work Experience: Have they done work that is meaningfully similar?

- Verbal Communication: Are they clear, organized, and articulate in how they present their thinking?

- Learning Ability: Do they demonstrate adaptability, curiosity, or the capacity to develop skills quickly?

- Attitude Toward the Position: Is their motivation for this specific role genuine and grounded?

- Professional Demeanor: Do they present themselves in a way appropriate to the role and organization?

Each dimension gets a numeric score and space for specific notes. Every rating level has a defined anchor, so a “3” means the same thing to every evaluator. Build the rubric before interviews begin; don't reverse-engineer it from the candidates you already like.
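The rubric structure described above can be sketched as a small data model. This is a minimal Python illustration, not interviewstream's implementation or any platform's API: the `Dimension` class, the `record_score` helper, and the anchor wording are all hypothetical. It encodes two rules from the text: every rating level has a written anchor, and a score is accepted only alongside a specific note.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dimension:
    """One rubric dimension with a written anchor for every rating level."""
    name: str
    anchors: dict[int, str]  # rating level -> description of that level


# Hypothetical anchors for illustration; write your own before interviews begin.
VERBAL_COMMUNICATION = Dimension(
    name="Verbal Communication",
    anchors={
        1: "Disorganized; the main point is hard to find.",
        2: "Main point is present but buried in tangents.",
        3: "Clear, organized answer that addresses the question.",
        4: "Clear and organized, supported by relevant examples.",
        5: "Clear, concise, and structured for the listener.",
    },
)


def record_score(dim: Dimension, score: int, note: str) -> dict:
    """Accept a score only if it matches a defined anchor and has a note."""
    if score not in dim.anchors:
        raise ValueError(f"{dim.name}: {score} is not a defined rating level")
    if not note.strip():
        raise ValueError(f"{dim.name}: a specific note is required")
    return {
        "dimension": dim.name,
        "score": score,
        "anchor": dim.anchors[score],
        "note": note,
    }
```

Calling `record_score(VERBAL_COMMUNICATION, 3, "Walked through the project in order.")` returns the score together with its anchor text, so every reviewer sees exactly what a "3" means at the moment they enter it.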

Step 3: Score Candidates Independently Before Discussing

This is where most hiring teams leave significant value on the table. When interviewers discuss candidates before submitting individual scores, group dynamics take over. The first person to speak anchors the conversation. The most senior person in the room has disproportionate influence. Individual evaluation gets replaced by social consensus.

Have every reviewer complete their structured interview scoring independently, and only then compare notes as a group. Differences in scores become useful data: they surface disagreements worth examining rather than averaging away. Multiple independent evaluators also provide protection against any single reviewer’s blind spots or biases.
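To show how score differences can be treated as data rather than averaged away, here is a minimal Python sketch. The reviewer names, the scores, and the one-point threshold are hypothetical; the Verbal Communication scores reuse the 4, 2, and 5 example from earlier. Each reviewer's scores are assumed to have been submitted independently, before any discussion.

```python
# Hypothetical independent scores: reviewer -> {dimension: score on a 1-5 scale}
SCORES = {
    "reviewer_a": {"Verbal Communication": 4, "Learning Ability": 3},
    "reviewer_b": {"Verbal Communication": 2, "Learning Ability": 3},
    "reviewer_c": {"Verbal Communication": 5, "Learning Ability": 4},
}


def flag_disagreements(scores: dict, max_spread: int = 1) -> dict:
    """Return dimensions where reviewers' scores differ by more than max_spread.

    The one-point default is an assumption; calibrate it to your own scale.
    """
    dimensions = sorted({dim for by_dim in scores.values() for dim in by_dim})
    flagged = {}
    for dim in dimensions:
        # Collect every reviewer's score for this dimension, in reviewer order.
        values = [by_dim[dim] for by_dim in scores.values() if dim in by_dim]
        spread = max(values) - min(values)
        if spread > max_spread:
            flagged[dim] = {"scores": values, "spread": spread}
    return flagged


for dim, detail in flag_disagreements(SCORES).items():
    print(f"Discuss {dim}: scores {detail['scores']} (spread {detail['spread']})")
```

With these numbers only Verbal Communication is flagged. The reviewers agree closely on Learning Ability, so the debrief can focus on the one dimension where their judgments diverge.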

Step 4: Compare Candidates Using Structured Data

Once you have standardized scores across consistent criteria, candidate comparison becomes genuinely useful. You can look at how candidates scored on communication, learning ability, or relevant experience, not just on overall impression. This kind of dimension-level comparison is especially valuable when deciding between two strong finalists who performed differently across areas.
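A dimension-level comparison of two finalists can be sketched in a few lines of Python. The candidate data is hypothetical, and averaging each dimension across independent reviewers is an assumption about how you aggregate. The output keeps each dimension separate instead of collapsing everything into one overall impression.

```python
from statistics import mean

# Hypothetical finalists: dimension -> independent reviewer scores (1-5)
FINALIST_A = {
    "Related Work Experience": [5, 4, 5],
    "Verbal Communication": [3, 3, 4],
    "Learning Ability": [3, 4, 3],
}
FINALIST_B = {
    "Related Work Experience": [3, 3, 4],
    "Verbal Communication": [4, 5, 4],
    "Learning Ability": [5, 4, 5],
}


def compare_by_dimension(a: dict, b: dict) -> list:
    """Return (dimension, mean_a, mean_b, a minus b) for each shared dimension."""
    rows = []
    for dim in a:
        if dim not in b:
            continue  # only compare dimensions both finalists were scored on
        mean_a, mean_b = mean(a[dim]), mean(b[dim])
        rows.append((dim, round(mean_a, 2), round(mean_b, 2), round(mean_a - mean_b, 2)))
    return rows


for dim, mean_a, mean_b, diff in compare_by_dimension(FINALIST_A, FINALIST_B):
    leader = "A" if diff > 0 else "B" if diff < 0 else "tie"
    print(f"{dim:<25} A={mean_a:.2f} B={mean_b:.2f} leader={leader}")
```

Here Finalist A is stronger on related experience, while Finalist B leads on communication and learning ability. A single overall score would hide that trade-off, which the panel should weigh against what the role actually needs.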

Modern video interview platforms make this comparison direct. Reviewers can watch candidates answer the same question side-by-side, then score against the same rubric within the same interface. This question-by-question comparison is fundamentally different from trying to remember how Candidate A answered a question after watching Candidates B through F.

How Video Interview Platforms Enable Structured Evaluation

The challenge with paper-based structured interview systems is that they are only as consistent as the humans administering them. Video interview software bakes structure into the process at the platform level, which is a meaningful shift.

- Standardized Interview Formats: Hiring teams build a requisition, attach the interview questions, and every candidate completes the same interview using the same format and time limits. There is no path for one candidate to receive follow-up prompts that another did not.

- Consistent Candidate Comparison: With video recruitment tools that support question-level review, recruiters can watch candidates answer the same question back-to-back and score each response in context before moving to the next. This is the structural advantage of digital interview formats over live panel interviews: the comparison is built into the workflow rather than reconstructed from memory afterward.

- Customizable Evaluation Rubrics: Good video interview software allows teams to configure the exact dimensions they want to score, set numeric weighting, capture star-based question-level scores, and collect free-form interviewer notes, all in one place and tied to the candidate record. Out-of-the-box frameworks work for general hiring; fully customizable rubrics serve teams with role-specific or compliance-driven requirements.

- Asynchronous Review: Multiple reviewers can evaluate candidates on their own schedules, independently, before a panel discussion. This removes scheduling friction and preserves individual judgment, which is particularly valuable for distributed hiring teams or high-volume video screening workflows.

This is also where one-way interview questions earn their place in the process. Because candidates record responses asynchronously, reviewers can focus entirely on evaluation rather than splitting their attention between listening, note-taking, and managing conversation flow.
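The numeric weighting mentioned above amounts to a weighted average of dimension scores. Below is a minimal Python sketch, assuming weights are relative importance values fixed before interviews begin. The weights, the scores, and the `weighted_score` function are hypothetical and do not describe any particular platform's configuration.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of dimension scores.

    Weights are relative (they need not sum to 1). Every weighted dimension
    must be scored; a gap raises an error instead of being skipped.
    """
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"Unscored dimensions: {missing}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("Weights must sum to a positive number")
    return sum(scores[dim] * w for dim, w in weights.items()) / total_weight


# Hypothetical weights: experience counts three times as much as learning ability.
WEIGHTS = {"Related Work Experience": 3, "Verbal Communication": 2, "Learning Ability": 1}
CANDIDATE_SCORES = {"Related Work Experience": 4, "Verbal Communication": 3, "Learning Ability": 5}

print(round(weighted_score(CANDIDATE_SCORES, WEIGHTS), 2))  # (4*3 + 3*2 + 5*1) / 6 = 23 / 6, prints 3.83
```

Because the weights are set before anyone is scored, every candidate is ranked by the same formula.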

How AI Interview Summaries Support Structured Evaluation (Without Replacing It)

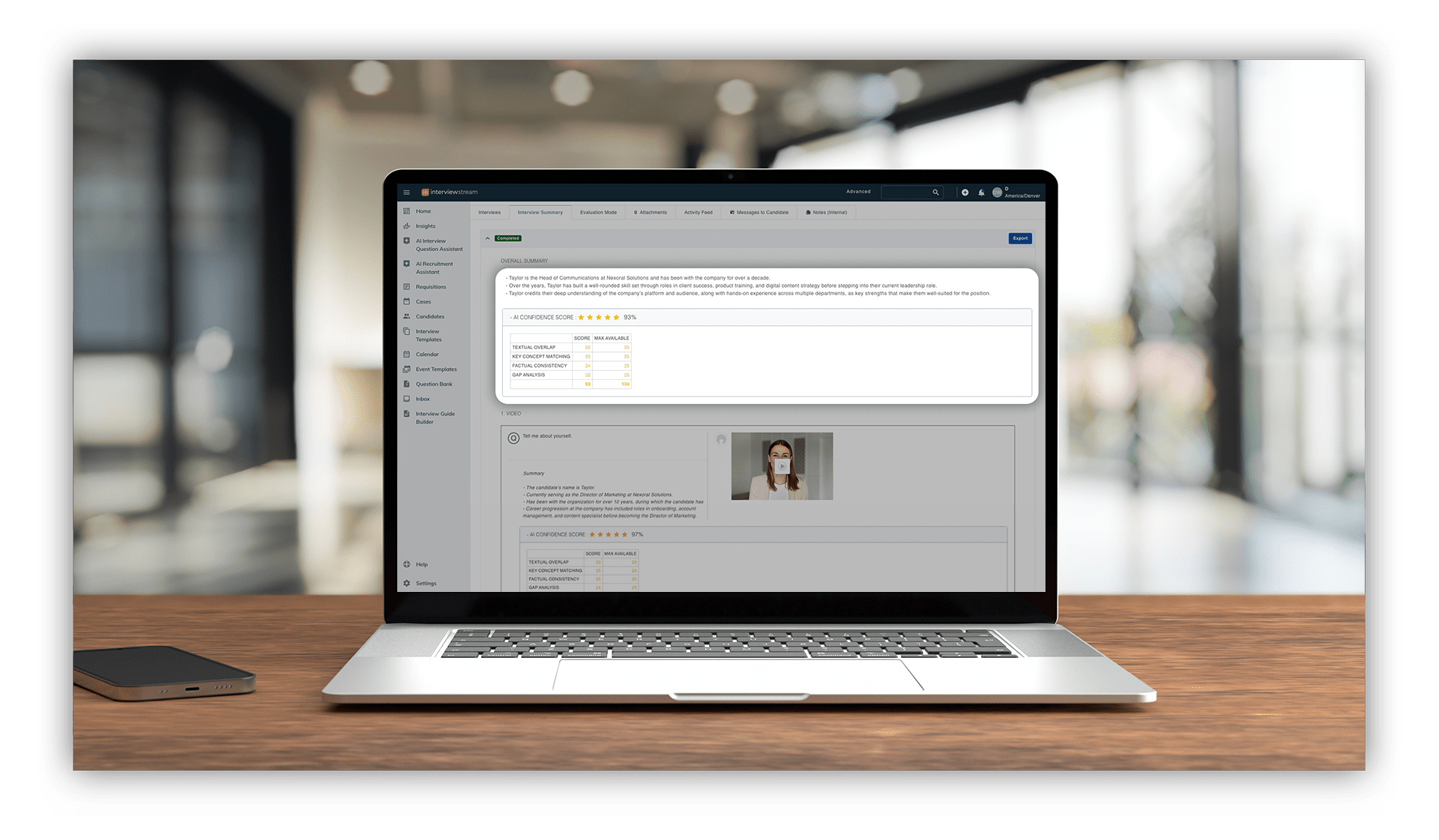

High-volume hiring is another breaking point for consistent evaluation. The more candidates in a pipeline, the harder it is to give each one genuine attention. AI interview summaries change that equation by giving reviewers a fast, accurate orientation to each candidate before they watch the recording or when they revisit an interview.

- Interview Summaries: After each completed video interview, AI generates a structured summary covering overall interview highlights, key themes from the candidate’s responses, and question-by-question callouts. Reviewers get a fast orientation to what a candidate said before watching the full recording. For initial video screening, this makes it practical to give every candidate meaningful attention even in a high-volume pipeline.

The important framing: AI summaries are a navigation tool, not a decision tool. They tell reviewers where to look, not what to conclude.

- Confidence Scores: This is the feature that separates AI-assisted evaluation from AI-driven evaluation, and it matters. Confidence scores measure the alignment between the AI-generated summary and the actual interview transcript. They appear at both the overall interview level and the individual question level, so reviewers can see how closely each summary reflects what the candidate actually said and know where to check the recording before relying on the notes.

By the numbers: Workable’s 2024 AI in Hiring survey of 950 hiring managers found that 85.3% of respondents observed AI increasing efficiency in their hiring process, and 77.9% reported cost reductions as a direct result of AI integration.

Download: Structured Interview Evaluation Rubric Template

To make this practical, we have put together a ready-to-use evaluation rubric template you can adapt for your team. It includes:

- Scoring categories for education, experience, communication, learning ability, attitude, and professionalism

- Numeric anchor descriptions for each rating level, so your entire team is calibrated to the same standard

- Space for question-level notes and an overall score

- A simple comparison summary for panel review

Download the Evaluation Rubric

Why Structured Evaluations Are Table Stakes for Modern Hiring

Recruiters today are making more decisions, faster, with more people weighing in and less time to get it right.

That is a recipe for evaluation inconsistency, even when the interviews themselves are well-designed. The structure built into the interview format falls apart if evaluation is left to individual judgment, informal notes, and memory.

Structured evaluations close that gap. They are the mechanism by which the consistency designed into your interview questions actually carries through to your hiring decisions.

The technology to run structured evaluations at scale already exists. Video interview platforms standardize the interview itself. Configurable rubrics standardize how responses are scored. Independent reviewer workflows keep group conformity bias out of the scores before discussion starts. AI-powered summaries make it practical for busy hiring teams to give every candidate the careful review they deserve.

The organizations that will win the hiring competition over the next few years are not necessarily the ones with the biggest recruiting budgets. They are the ones with the most consistent, efficient, and fair evaluation processes. Structured evaluation is how you get there.

——

Want to see how interviewstream’s video interview software and AI interview summaries support structured evaluation at scale?


About The Author

Drew Whitehurst is the Director of Marketing, RevOps, and Product Strategy at interviewstream. He's been with the company since 2014 working in client services and marketing. He is an analytical thinker, coffee enthusiast, and hobbyist at heart.